Everyone posts clean benchmark tables. Here’s what happens when you actually try to reproduce them.

I’ve been running a Mac Studio M4 Max (64GB) as a local LLM server for a couple weeks now. Four models, two backends, and way more debugging than expected. This post documents what I found, including the parts that didn’t work.

The hardware

Mac Studio M4 Max with 64GB unified memory. The unified memory architecture means the GPU and CPU share the same 64GB pool, so large models can actually fit without swapping.

The models

I tested four Qwen models, all 4-bit quantized:

- Qwen3.5 0.8B (dense) - the tiny one, for quick tasks

- Qwen3.5 9B (dense) - general purpose

- Qwen3 30B-A3B (MoE) - 30 billion parameters total, but only 3 billion active per token

- Qwen2.5-Coder 32B (dense) - code-focused model

Why Qwen? They’re solid open models with good performance. Why 4-bit? Because I want to fit multiple models in 64GB and still have room for context.
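Here is a back-of-the-envelope check on the 64GB budget. It rests on several assumptions. Weights take about 4.5 bits each, roughly what a 4-bit format with per-group scales works out to; Ollama's Q4 variants are in the same ballpark. The MoE counts at its full 30B, because every expert has to stay resident. The KV-cache estimate uses Qwen2.5-32B's published shape (64 layers, 8 KV heads, head dimension 128). Treat all of these as assumptions, not measurements.

```python
def weight_gb(params: float, bits_per_weight: float = 4.5) -> float:
    """Approximate resident weight memory in GB (1e9 bytes) for a quantized model."""
    return params * bits_per_weight / 8 / 1e9


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int, tokens: int,
                   bytes_per_elem: int = 2) -> int:
    """K and V tensors (hence the 2) for every layer, KV head and head dim, per token."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens


# Nominal total parameter counts; an MoE still needs all of its experts in memory.
models = {"0.8B": 0.8e9, "9B": 9e9, "30B-A3B": 30e9, "Coder 32B": 32e9}
total = sum(weight_gb(p) for p in models.values())
print(f"weights for all four: ~{total:.1f} GB")

# Assumed Qwen2.5-32B shape: 64 layers, 8 KV heads, head_dim 128, fp16 cache.
kv = kv_cache_bytes(64, 8, 128, tokens=32_768)
print(f"32K-token KV cache for Coder 32B: {kv / 2**30:.0f} GiB")
```

Under those assumptions the four models' weights come to roughly 40 GB. A 32K-token fp16 KV cache for the 32B model adds about 8 GiB on top. So the plan fits in 64GB, but it doesn't leave a lot of slack.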

The MoE model (Mixture of Experts) is the interesting one. It has 30B total parameters, but only activates 3B for any given token. That should make it faster than the 9B dense model despite being 3x larger on disk. We’ll see if that’s true.

Speed benchmarks: Ollama vs MLX

I tested both Ollama (which uses llama.cpp) and vllm-mlx (native MLX implementation). Same models, same test parameters: 2048 token prompt, 32 token generation, 3 runs each. Speed measured with llama-benchy.
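For readers reproducing this, here is a sketch of how TTFT and generation speed are commonly derived from a streamed response, given the wall-clock time of the request and the arrival time of each token. This is not necessarily how llama-benchy computes its numbers. The function and field names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Sequence


@dataclass
class RunMetrics:
    ttft_s: float   # time to first token, in seconds
    gen_tps: float  # decode rate after the first token, in tokens/s


def run_metrics(t_request: float, token_times: Sequence[float]) -> RunMetrics:
    """Derive TTFT and generation rate from per-token arrival timestamps (seconds)."""
    if len(token_times) < 2:
        raise ValueError("need at least two tokens to measure a generation rate")
    ttft = token_times[0] - t_request
    # The first token's latency is dominated by prompt processing, so the
    # decode rate only counts the tokens that arrived after it.
    gen_tps = (len(token_times) - 1) / (token_times[-1] - token_times[0])
    return RunMetrics(ttft, gen_tps)


def average(runs: List[RunMetrics]) -> RunMetrics:
    """Mean over repeated runs (3 per model in this post)."""
    return RunMetrics(mean(r.ttft_s for r in runs), mean(r.gen_tps for r in runs))
```

Separating TTFT from the decode rate matters here. With a 2048-token prompt and only 32 generated tokens, prompt processing dominates the total wall time.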

Ollama results

| Model | Prompt (tok/s) | Gen (tok/s) | TTFT |
|---|---|---|---|
| Qwen3.5 0.8B | 3,475 | 97.4 | 0.56s |
| Qwen3 30B-A3B (MoE) | 1,197 | 81.5 | 1.47s |
| Qwen3.5 9B | 667 | 37.9 | 2.60s |
| Qwen2.5-Coder 32B | 197 | 21.5 | 8.67s |

The 0.8B model is fast (97 tokens/second) but useless. It gets basic facts wrong.

The MoE model generates at 81.5 tok/s. The 9B dense model? Only 37.9 tok/s. The MoE is 2.1x faster despite having 3x more total parameters. That’s the MoE advantage: most of the model stays dormant.

The Coder 32B model is the slowest at 21.5 tok/s, but that’s still readable. In my experience, anything over 20 tok/s feels acceptable.

MLX results

| Model | Gen (tok/s) | Peak (tok/s) | TTFT |
|---|---|---|---|
| Qwen3.5 0.8B | 370.95 | 425.94 | 0.32s |
| Qwen3-Coder 30B-A3B (MoE) | 112.94 | 118.65 | 1.29s |
| Qwen3.5 9B | 82.53 | 87.99 | 2.41s |
| Qwen2.5-Coder 32B | 23.50 | 24.00 | 9.66s |

MLX is consistently faster. The speedup varies by model size:

- 0.8B: 3.8x faster (97 → 371 tok/s)

- 30B MoE: 1.4x faster (82 → 113 tok/s)

- 9B: 2.2x faster (38 → 83 tok/s)

- 32B: 1.1x faster (22 → 24 tok/s)
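Each speedup is simply the MLX generation rate divided by the Ollama one. A few lines of Python reproduce them from the two tables above. The short row labels are my own.

```python
# Generation rates (tok/s) copied from the Ollama and MLX tables.
ollama = {"0.8B": 97.4, "30B MoE": 81.5, "9B": 37.9, "32B": 21.5}
mlx = {"0.8B": 370.95, "30B MoE": 112.94, "9B": 82.53, "32B": 23.50}


def speedups(base: dict, fast: dict) -> dict:
    """Ratio fast/base for every model present in base."""
    return {name: fast[name] / base[name] for name in base}


for name, s in speedups(ollama, mlx).items():
    print(f"{name}: {s:.1f}x")
```

One caveat is worth checking. The MLX table labels the MoE row Qwen3-Coder 30B-A3B, while the Ollama table says Qwen3 30B-A3B. If those are different checkpoints, the 1.4x figure compares two models as well as two backends.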

Small models benefit most from MLX’s Apple Silicon optimizations. Large models hit memory bandwidth limits on both backends, so the gap narrows.
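The bandwidth argument can be made roughly quantitative. During decoding, each token has to read every active weight from memory at least once. That puts a ceiling on tokens/s of about bandwidth ÷ bytes of active weights. The sketch below assumes 546 GB/s, Apple's published figure for the higher-binned M4 Max; check your exact configuration. It also assumes about 4.5 bits per weight, and about 3B active parameters for the MoE. It ignores KV-cache reads and compute, so it is only an upper bound.

```python
def decode_ceiling_tps(active_params: float, bits_per_weight: float = 4.5,
                       bandwidth_gb_s: float = 546.0) -> float:
    """Rough upper bound on decode speed if each token streams every active weight once.

    Ignores KV-cache reads, activations and compute, so real numbers land below it.
    """
    bytes_per_token = active_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


# Active parameters per token, and the MLX generation rates from the table above.
measured = {"0.8B": (0.8e9, 370.95), "30B-A3B (3B active)": (3e9, 112.94),
            "9B": (9e9, 82.53), "32B": (32e9, 23.50)}
for name, (active, tps) in measured.items():
    ceiling = decode_ceiling_tps(active)
    print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {tps} ({tps / ceiling:.0%})")
```

Under these assumptions, the 9B and 32B reach roughly three quarters of their ceilings. The 0.8B and the MoE reach only about a third of theirs. That pattern is consistent with the larger dense models being bandwidth-bound, and with the small and sparse models being limited by other per-token overheads.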

The clear winner for interactive use is vllm-mlx. The 30B MoE model at 113 tok/s feels instant.

Quality benchmarks: the three-attempt saga

Speed is easy to measure. Quality is where it got messy.

I used lm-evaluation-harness to test coding ability (HumanEval, MBPP) and math reasoning (GSM8K). HumanEval is a set of 164 hand-written Python function-completion problems. MBPP (Mostly Basic Python Problems) is a dataset of 974 crowd-sourced Python programming problems. GSM8K is a set of 8,500 grade school math word problems. These are standard benchmarks, so it should have been straightforward.

It was not straightforward.

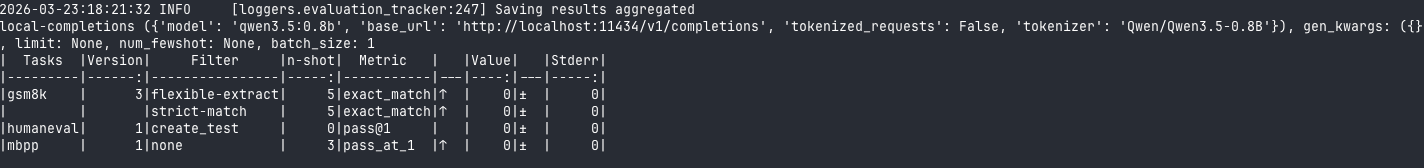

Attempt 1: wrong endpoint

First attempt used the local-completions endpoint. This sends raw text to the model without chat formatting.

Results:

| Model | HumanEval | MBPP | GSM8K (flex) | GSM8K (strict) |
|---|---|---|---|---|
| 0.8B | 0% | 0% | 0% | 0% |
| 9B | 0% | 0% | 0% | 0% |
| 30B MoE | 0% | 0% | 0.53% | 0.38% |
| Coder 32B | 0% | 0% | 68.23% | 46.85% |

Almost all zeros. The Coder 32B got 68% on GSM8K, which proved the model works and the API responded. But HumanEval and MBPP both failed completely.

The problem: these are chat/instruct models. They expect messages wrapped in a chat template. When they are sent raw text instead, they don’t return code in the shape the benchmark harness expects to parse.
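Here is a sketch of how the first two attempts differ in harness configuration, using lm-evaluation-harness’s Python entry point `lm_eval.simple_evaluate`. Several details are assumptions for illustration: the Ollama base URL, `num_concurrent=1`, the model tag, and the optional Hugging Face tokenizer argument. Argument names can also shift between harness releases, so check them against the 0.4.x docs before relying on this.

```python
from typing import Literal, Optional

BASE = "http://localhost:11434/v1"  # assumed: Ollama's OpenAI-compatible API


def harness_kwargs(mode: Literal["completions", "chat"], model: str,
                   hf_tokenizer: Optional[str] = None) -> dict:
    """Keyword arguments for lm_eval.simple_evaluate for one endpoint style."""
    if mode == "completions":
        # Attempt 1: prompts go out as raw text; no chat template is applied.
        args = f"model={model},base_url={BASE}/completions,num_concurrent=1"
        if hf_tokenizer:
            # local-completions tokenizes client-side; point it at a matching HF tokenizer.
            args += f",tokenizer={hf_tokenizer}"
        return {"model": "local-completions", "model_args": args,
                "apply_chat_template": False}
    # Attempt 2: each prompt is wrapped in chat messages before it is sent.
    args = f"model={model},base_url={BASE}/chat/completions,num_concurrent=1"
    return {"model": "local-chat-completions", "model_args": args,
            "apply_chat_template": True}


def run(mode, model, tasks, hf_tokenizer=None):
    import lm_eval  # lazy import: the helper above works without the harness installed
    return lm_eval.simple_evaluate(
        tasks=tasks,
        confirm_run_unsafe_code=True,  # humaneval/mbpp execute model-written code
        **harness_kwargs(mode, model, hf_tokenizer),
    )
```

With this helper, attempt 2 would look something like `run("chat", "qwen2.5-coder:32b", ["mbpp"])`, where the model tag is a hypothetical example.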

Attempt 2: chat endpoint

I switched to local-chat-completions with proper message formatting.

| Model | HumanEval | MBPP |
|---|---|---|
| Coder 32B | 0% | 76.2% ± 1.91% |
| 9B | 0% | 9.6% ± 1.32% |
| 30B MoE | 0% | 0% |

MBPP came alive. Coder 32B hit 76.2%, which is a believable number for a code-focused model. The 9B got 9.6%, also reasonable for a general model.
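The ± values are standard errors, and they are easy to check. The check assumes the harness scored 500 MBPP problems (the size of the standard MBPP test split) and used the sample standard deviation of 0/1 scores. Under those assumptions, the formula below reproduces both reported values, 1.91% and 1.32%. That is a useful sign the runs scored the full set.

```python
import math


def mean_stderr(p: float, n: int) -> float:
    """Standard error of an accuracy p over n pass/fail problems (sample std / sqrt(n))."""
    # For 0/1 scores the sample variance is n*p*(1-p)/(n-1), so SE = sqrt(p(1-p)/(n-1)).
    return math.sqrt(p * (1 - p) / (n - 1))


for name, p in [("Coder 32B", 0.762), ("9B", 0.096)]:
    se = mean_stderr(p, 500)
    print(f"{name}: {p:.1%} ± {se:.2%} (95% interval roughly ±{1.96 * se:.1%})")
```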

HumanEval still returned 0% across the board. On every model. Including Coder 32B, which is specifically trained for code and did well on MBPP.

Something was broken with HumanEval specifically.

Attempt 3: the think-tag rabbit hole

Qwen3 models have a thinking mode where they output reasoning inside `<think>` tags. I had a theory: maybe these tags were polluting the code output and causing HumanEval to fail parsing.

I built a FastAPI proxy server that strips `<think>...</think>` blocks from responses and pointed the benchmark harness at the proxy instead of directly at the model.

The think-strip proxy code

```python
import re

import httpx
from fastapi import FastAPI, Request
from fastapi.responses import JSONResponse, Response

app = FastAPI()
UPSTREAM = "http://localhost:11434"  # Ollama

# A complete <think>...</think> block plus any whitespace after it.
_RE_THINK_COMPLETE = re.compile(r"<think>.*?</think>\s*", re.DOTALL)
# An unclosed <think> (e.g. generation cut off mid-reasoning): drop it to end of text.
_RE_THINK_OPEN = re.compile(r"<think>.*$", re.DOTALL)


def strip_thinking(text: str) -> str:
    text = _RE_THINK_COMPLETE.sub("", text)
    text = _RE_THINK_OPEN.sub("", text)
    return text.strip()


@app.api_route("/{path:path}", methods=["GET", "POST", "PUT", "DELETE"])
async def proxy(path: str, request: Request):
    url = f"{UPSTREAM}/{path}"
    body = await request.body()
    # Forward the client's headers, minus the ones httpx must recompute.
    headers = {
        k: v for k, v in request.headers.items()
        if k.lower() not in ("host", "content-length")
    }
    async with httpx.AsyncClient(timeout=600.0) as client:
        response = await client.request(
            method=request.method, url=url,
            content=body, headers=headers,
        )

    try:
        data = response.json()
    except ValueError:
        # Not JSON (e.g. a streamed or plain-text reply): pass the body through untouched.
        return Response(
            content=response.content,
            status_code=response.status_code,
            media_type=response.headers.get("content-type"),
        )

    # OpenAI-style replies: chat uses choices[].message.content, completions use choices[].text.
    if isinstance(data, dict):
        for choice in data.get("choices", []):
            message = choice.get("message") or {}
            if isinstance(message.get("content"), str):
                message["content"] = strip_thinking(message["content"])
            if isinstance(choice.get("text"), str):
                choice["text"] = strip_thinking(choice["text"])
    return JSONResponse(content=data, status_code=response.status_code)


if __name__ == "__main__":
    import uvicorn

    print("think-strip-proxy on :18000 -> Ollama :11434")
    uvicorn.run(app, host="0.0.0.0", port=18000)
```

Results:

| Model | HumanEval | MBPP |
|---|---|---|
| 9B | 0% | 9.6% |
| 30B MoE | 0% | 0% |

Identical to attempt 2. The thinking tags were not the problem.

What actually happened

After hours of debugging, here’s what I learned:

- HumanEval is broken with the `local-chat-completions` endpoint in lm-evaluation-harness 0.4.11. The stop sequences probably truncate responses before the function bodies are complete. Every model gets 0%, even strong coders like Qwen2.5-Coder 32B.

- MBPP works correctly via chat completions. Coder 32B’s 76.2% is a real number.

- GSM8K works via raw completions, at least for Coder 32B. Its 68.23% on math reasoning is solid for a code-focused model.

- The thinking-tags theory was wrong, and the proxy experiment proved it. The real issue is prompt-format compatibility between the benchmark harness and the chat API.

- Benchmarking is harder than running the model. Three attempts, multiple configurations, hours of compute time, and I still don’t have working HumanEval numbers.
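The stop-sequence hypothesis is easy to illustrate. This sketch assumes a stop list of `\nclass`, `\ndef`, `\n#`, `\nif` and `\nprint`, modeled on completion-style HumanEval setups; check the task YAML in your harness version for the real list. It compares what happens to a typical chat-style reply with what happens to a raw continuation of the function body. `apply_stop` is a hypothetical helper, not a harness function.

```python
from typing import Sequence

# Assumed stop list; not copied from any specific harness release.
STOP = ["\nclass", "\ndef", "\n#", "\nif", "\nprint"]
FENCE = "`" * 3  # markdown code fence, built up to keep this listing readable


def apply_stop(text: str, stops: Sequence[str]) -> str:
    """Cut text at the earliest occurrence of any stop sequence."""
    cut = len(text)
    for s in stops:
        i = text.find(s)
        if i != -1:
            cut = min(cut, i)
    return text[:cut]


# A chat model tends to restate the whole function inside a fenced block...
chat_reply = f"Here is the completed function:\n{FENCE}python\ndef add(a, b):\n    return a + b\n{FENCE}"
# ...while a completion model just continues the body it was given.
continuation = "    return a + b\n\n\ndef other():\n    pass"

print(repr(apply_stop(chat_reply, STOP)))    # the body is gone: cut at "\ndef"
print(repr(apply_stop(continuation, STOP)))  # the body survives
```

If a chat reply opens with a preamble and then `def ...`, this kind of stop list cuts it before any function body appears. That would produce exactly the across-the-board 0% described above.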

This is the real homelab experience. The blog posts show clean tables. They don’t show the failed attempts.

What’s MoE and why does it matter?

Mixture of Experts (MoE) is an architecture where the model has many parameter sets (“experts”), but only activates a subset for each token. Think of it like having 10 specialists on call, but only calling 1-2 per question.

The Qwen3 30B-A3B model has 30 billion parameters total, but only activates 3 billion per token. The rest stay dormant.
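Top-k routing is the mechanism behind "only activates 3 billion per token". Here is a toy sketch in pure Python. A router scores every expert, only the k highest-scoring experts actually run, and their outputs are mixed by softmax weights renormalized over the chosen k. Qwen3-30B-A3B's published config lists 128 experts with 8 active per token. The tiny sizes and linear "experts" here are illustrative only, and real models route every token inside every MoE layer.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: Vector, router: List[Vector], experts: List[Expert],
                k: int = 2) -> Tuple[Vector, List[int]]:
    """Route one token through the top-k experts; the other experts are never called."""
    # One router logit per expert: dot product of that expert's router row with x.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax over the selected logits only (equivalent to softmax-then-renormalize over top-k).
    weights = softmax([logits[i] for i in top])
    out = [0.0] * len(x)
    for w, i in zip(weights, top):
        y = experts[i](x)  # only k expert computations happen per token
        out = [o + w * yi for o, yi in zip(out, y)]
    return out, top
```

Compute per token scales with k, not with the number of experts. Memory still scales with all of them, which is the tradeoff described next.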

This gives you most of the quality benefits of a 30B model with per-token compute closer to that of a 3B model. In practice, it generated at 113 tok/s on MLX, faster than the 9B dense model (83 tok/s) despite being 3x larger on disk.

The tradeoff: slightly lower quality than a full 30B dense model, and you still need 18GB of disk space and enough RAM to load all 30B parameters. But for interactive use, the speed gain is worth it.

What I’ll actually run

For now I’m sticking with two models while I test others and wait for better ones to come out:

For daily use: Qwen3 30B-A3B on vllm-mlx. Best balance of speed (113 tok/s) and quality. It handles most tasks well.

For coding: Qwen2.5-Coder 32B on vllm-mlx. Slower (24 tok/s), but the quality on code tasks is noticeably better. It’s worth the wait for complex codegen.

Setup gotchas nobody mentions

The benchmarks are one thing. Getting to the point where I can run them was another.

ISP blocking Cloudflare R2: My ISP blocks Cloudflare R2. Ollama downloads models from R2. Result: dial tcp 172.64.66.1:443: i/o timeout on every model pull. Workaround: VPN exit node through the US. It took me a while to figure out why downloads were failing.
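A quick way to tell "blocked" from "slow" is to attempt a bare TCP handshake to the address in the error. This standard-library sketch is one way to do that. The 5-second timeout is an arbitrary choice, and `can_connect` is a hypothetical helper name.

```python
import socket


def can_connect(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP handshake to host:port completes within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers timeouts as well as refused/unreachable errors
        return False
```

Try `can_connect("172.64.66.1")`, using the address from the Ollama error, once with the VPN off and once with it on. If it returns False without the VPN and True with it, the problem is the network path, not Ollama.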

WiFi AP isolation: The ISP router had client isolation enabled so devices on the same network couldn’t talk to each other. No SSH, no SCP, no local API calls. I ended up connecting the Mac Studio via ethernet to transfer model files. I know there are easier ways, but I had to work on my cabling anyway to prepare for the Brume 3 (VPN security gateway).

SCP model transfer: I downloaded models on the laptop (via VPN), then scp -r ~/.ollama/models/ studio:~/.ollama/. Gigabit ethernet got me 50-58 MB/s between Macs. Not fast, but faster than fighting with the ISP.

Qwen3.5 thinking-mode meltdown: I used a snarky system prompt for testing (“be adversarial, challenge assumptions”). The model entered an infinite reasoning loop and flooded Discord with thousands of emoji and repeated text. Neutral system prompts work fine. Don’t provoke the thinking mode.

Trustworthy numbers

After all that, here’s what I can report with confidence:

| Model | Gen (Ollama) | Gen (MLX) | MBPP | GSM8K |
|---|---|---|---|---|
| Qwen3.5 0.8B | 97 tok/s | 371 tok/s | 0% | 0% |
| Qwen3.5 9B | 38 tok/s | 83 tok/s | 9.6% | 0% |
| Qwen3 30B-A3B (MoE) | 82 tok/s | 113 tok/s | 0%* | 0.5% |
| Qwen2.5-Coder 32B | 22 tok/s | 24 tok/s | 76.2% | 68.2% |

*The 30B MoE model’s 0% on MBPP is sus. Likely an eval pipeline issue, not a model quality issue. I’ll get back to this at some point.

Takeaways:

- MLX is faster across the board

- MoE delivers on the speed promise

- Benchmarking local models is harder than running them

- ISPs will absolutely ruin your day in ways you didn’t expect

That’s the real experience of setting up a local LLM server. Not “brew install ollama and you’re done.” More like “brew install ollama, fight your network for three hours, debug benchmark harness configs, discover your ISP blocks half the internet, and eventually get numbers you half-trust.”

Worth it? Yeah. Having 113 tok/s inference running locally, with no API costs and no rate limits, is genuinely useful. But it’s not a five-minute setup.